The fast inverse square root is a clever algorithm that approximates 1/sqrt(x). It became famous when the Quake III source code was made public around 2005. While it was initially attributed to Carmack, he denied having written it. Its origins aren’t completely clear and they can be traced back way before Quake III was launched in 1999.

float fastInvSqrt(float x) {

float xhalf = 0.5f * x;

int i = *(int*)&x; // evil floating point bit level hacking

i = 0x5f3759df - (i >> 1); // what the fuck?

x = *(float*)&i;

x = x*(1.5f-(xhalf*x*x));

return x;

}

Lots of people have written about this hack and explained how it works. There are even mathematics papers that study the magic number 0x5f3759df alone. Many people have also asked if it’s still useful on modern processors. The most common answer is no, that this hack was useful 20+ years ago and that modern-day processors have better and faster ways to approximate the inverse square root.

We’ll put those claims to the test in this post.

How good an approximation is it?

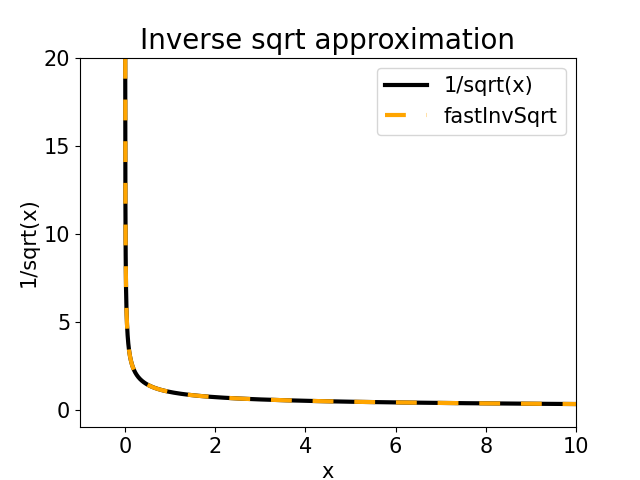

It has been calculated that the fastInvSqrt approximates with a relative error of the order of 0.1%. If we graph it against the computer calculated 1/sqrt(x) value, we will get almost identical curves.

Benchmarking program

To evaluate the performance, we will calculate the square root of all positive float values in a loop.

/* profiling.c */

#include <limits.h>

#include <math.h>

float fastInvSqrt(float x);

float invSqrt(float x);

int main(int argc, char *argv[]) {

float result;

float (*f)(float);

if (argc != 2)

return 1;

if (*argv[1] == 'f')

f = fastInvSqrt;

else if (*argv[1] == 'i')

f = invSqrt;

else

return 1;

int i;

for (i=1; i < INT_MAX; i++) {

float x = *(float *)&i;

result = f(x);

}

return result;

}

float fastInvSqrt(float x) {

float xhalf = 0.5f * x;

int i = *(int*)&x;

i = 0x5f3759df - (i >> 1);

x = *(float*)&i;

x = x*(1.5f-(xhalf*x*x));

return x;

}

float invSqrt(float x) {

return 1/sqrt(x);

}

A command-line argument, either f or i, will be used to select the fastInvSqrt approximation or a regular 1/sqrt(x) in the loop.

Inspecting the generated assembly code

Let’s check the generated assembly code for both functions: fastInvSqrt and invSqrt. Both of them were generated using the gcc -Og flag, which will be the tested version.

The fastInvSqrt assembly code is almost a direct translation of the C code. We can see there are three multiplication instructions (mulss), which might determine the critical path for this algorithm.

fastInvSqrt: movaps %xmm0, %xmm1 # %xmm1 = x mulss .LC0(%rip), %xmm1 # %xmm1 = 0.5f * x movd %xmm0, %eax # %eax = x (same bytes, but as integer) sarl %eax # %eax = i >> 1 movl $1597463007, %edx # %edx = 0x5f3759df subl %eax, %edx # %edx = 0x5f3759df - (i >> 1) movd %edx, %xmm0 # %xmm0 = %edx bytes as float mulss %xmm0, %xmm1 # %xmm1 = xhalf * x mulss %xmm0, %xmm1 # %xmm1 = xhalf * x * x movss .LC1(%rip), %xmm2 # %xmm2 = 1.5f subss %xmm1, %xmm2 # %xmm2 = 1.5f - (xhalf*x*x) mulss %xmm2, %xmm0 # %xmm0 = x*(1.5f-(xhalf*x*x)) ret # return %xmm0 .LC0: .long 1056964608 # 0x3f000000 (0.5f) .align 4 .LC1: .long 1069547520 # 0x3fc00000 (1.5f)

The simple 1/sqrt(x) C statement is translated into several assembly instructions. We can see that even though we’re operating on afloat datatype, the machine converts it to double and then back to float to generate the results. Besides the sqrt function call, which we will leave as a black-box, there’s a division instruction (divsd), which might be the limiting step to calculate the inverse square root.

invSqrt: cvtss2sd %xmm0, %xmm0 # xmm0 = (double) x call sqrt@PLT # xmm0 = sqrt (x) movsd .LC0(%rip), %xmm1 # %xmm1 = 1 (0x3ff0000000000000) divsd %xmm0, %xmm1 # %xmm1 = 1 / sqrt(x) pxor %xmm0, %xmm0 # %xmm0 = 0 cvtsd2ss %xmm1, %xmm0 # %xmm0 = (float) %xmm1 ret # return %xmm0 .LC0: .long 0 # 0x00000000 .long 1072693248 # 0x3ff00000

Benchmarking results

The profiling program was compiled as:

$ gcc -Og -o main profiling.c -lm

The performance was evaluated using perf_events. An Intel Core i5 2500K was used for the tests.

First, the fast inverse square root approximation:

$ perf stat ./main f

Performance counter stats for './main f':

6.217,04 msec task-clock # 0,997 CPUs utilized

996 context-switches # 0,160 K/sec

0 cpu-migrations # 0,000 K/sec

60 page-faults # 0,010 K/sec

22.497.523.463 cycles # 3,619 GHz

5.831.547.158 stalled-cycles-frontend # 25,92% frontend cycles idle

3.229.721.280 stalled-cycles-backend # 14,36% backend cycles idle

40.828.928.629 instructions # 1,81 insn per cycle

# 0,14 stalled cycles per insn

6.448.514.878 branches # 1037,233 M/sec

263.848 branch-misses # 0,00% of all branches

6,235267619 seconds time elapsed

6,198586000 seconds user

0,019982000 seconds sys

It took about 6 seconds. The low percentage of stalled cycles (25%) and the instructions per cycle rate greater than one (1.81), indicate that the processor was able to pipeline the instructions without too much blocking.

Next, the regular 1/sqrt(x) calculation.

perf stat ./main i

Performance counter stats for './main i':

26.463,06 msec task-clock # 0,997 CPUs utilized

4.256 context-switches # 0,161 K/sec

0 cpu-migrations # 0,000 K/sec

60 page-faults # 0,002 K/sec

96.271.576.226 cycles # 3,638 GHz

78.085.953.636 stalled-cycles-frontend # 81,11% frontend cycles idle

59.599.245.094 stalled-cycles-backend # 61,91% backend cycles idle

53.797.950.617 instructions # 0,56 insn per cycle

# 1,45 stalled cycles per insn

17.205.136.657 branches # 650,157 M/sec

1.089.687 branch-misses # 0,01% of all branches

26,538942054 seconds time elapsed

26,404007000 seconds user

0,063980000 seconds sys

Close to 26 seconds. We have more instructions, but the greatest problem is the high percentage of stalled cycles. The low instructions per cycle rate (0.56) also indicates poor performance. In this case, the processor is blocked by costly operations, maybe the division instruction.

Surprisingly, the 20+ years old fast inverse square root approximation was able to outperform a regular 1/sqrt(x) calculation by a factor of 4x.

Should we still use the fast inverse square root?

While the results above might indicate that we could, the tests were not fair. The fast inverse square root algorithm returns an approximate result. On the other hand, 1/sqrt(x) is exact to the extent of floats precision.

In order to correct this fault, we’ll compare the fastInvSqrt approximation with another approximation, the rsqrtss instruction of modern processors.

[rsqrtss] Computes an approximate reciprocal of the square root of the low single-precision floating-point value in the source operand.

The relative error for this approximation is:

Intel® 64 and IA-32 architectures software developer’s manual

|Relative Error| ≤ 1.5 ∗ 2^−12

We have a relative error of the order of 0.01%. Even more precise than fastInvSqrt‘s 0.1%.

The following program will be used to test it.

/* invSqrt_rsqrt.c */

#include <limits.h>

float rsqrt(float x);

int main() {

float result;

for (int i = 1; i < INT_MAX; i++) {

float x = *(float *)&i;

result = rsqrt(x);

}

return result;

}

The rsqrt function was written in assembly (to be included in the C program):

# file rsqrt.s

.globl rsqrt

rsqrt: # float rsqrt(float x)

pxor %xmm1, %xmm1 # set %xmm1 to 0

movss %xmm0, %xmm1 # %xmm1 = x

rsqrtss %xmm1, %xmm0 # %xmm0 1/sqrt(x) approximation

ret # return %xmm0

An executable was generated like so:

$ gcc -Og -o main_rsqrt invSqrt_rsqrt.c rsqrt.s

And, finally, the performance results:

$ perf stat ./main_rsqrt

Performance counter stats for './main_rsqrt':

4.213,01 msec task-clock # 0,999 CPUs utilized

402 context-switches # 0,095 K/sec

0 cpu-migrations # 0,000 K/sec

47 page-faults # 0,011 K/sec

15.072.163.336 cycles # 3,578 GHz

6.476.764.013 stalled-cycles-frontend # 42,97% frontend cycles idle

31.495.391 stalled-cycles-backend # 0,21% backend cycles idle

21.483.118.113 instructions # 1,43 insn per cycle

# 0,30 stalled cycles per insn

8.591.579.921 branches # 2039,297 M/sec

46.127 branch-misses # 0,00% of all branches

4,216290125 seconds time elapsed

4,213955000 seconds user

0,000000000 seconds sys

It took about 4 seconds. While the percentage of stalled cycles was a bit high (42%), the number of instructions was halved. Overall, it runs 30% faster than fastInvSqrt!

While this is already an improvement over the original approximation, we could get even faster results using SIMD instructions. The VRSQRTPS instruction, for example, can calculate the approximate of 1/sqrt(x) of 8 float values at a time. If it’s as fast as thersqrtss instruction, that would make it 10x faster than the original fastInvSqrt approximation.

Using -Og causes the compiler to emit a call to sqrt, which makes the whole benchmark not very useful. What would be much more useful is comparing at -O2, so that the compiler can inline sqrt into a single instruction.