Computers represent all of their data using the binary system. In this system, the only possible values are 0 and 1. These binary digits are also called bits. The demonstration below shows the bit-level representation of some common integer data types in C.

In this post, we’ll see how computers encode integer values. Both unsigned and signed representations are explained. We’ll also analyze the range of values that can be represented with a given number of bits. Finally, we’ll take a closer look at some of the integer data types used in C, which are similar to those used in many other programming languages.

Unsigned values

We’ll start by analyzing how to represent positive values and zero. These are referred to as unsigned integer types.

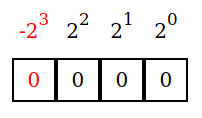

To represent numbers in general and unsigned values in particular, computers use arrays of bits. For demonstration purposes, we’ll use a 4-bit array.

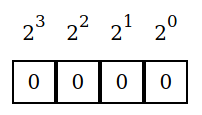

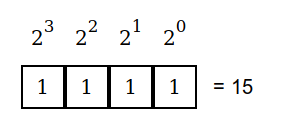

Each one of these bits has a weight, and the weights are increasing powers of 2.

Counting the bits starting from zero, the 0th bit (the rightmost bit) has a weight of $2^0 = 1$. The one that follows has a weight of $2^1 = 2$, and so on. In general, the $i$th bit has a weight of $2^i$.

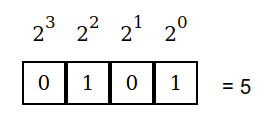

Each bit tells us whether or not to include its weight in the number that is encoded. When a bit equals 0, we don’t include its weight. When it equals 1, we add its corresponding weight to the total. The value encoded by these bits is equal to the sum of all the weights of the bits set to 1.

In the example above, the 0th and the 2nd bits are set to 1. It follows that the encoded number is $2^0 + 2^2 = 1 + 4 = 5$.

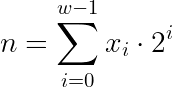

In mathematical terms, the value $n$ encoded by an array of $w$ unsigned bits is equal to:

$$n = \sum_{i=0}^{w-1} x_i \cdot 2^i$$

where $x_i$ is the $i$th bit.

Signed values

Signed data types are those that can represent negative values as well as positive ones. The most common way to do this is two’s complement encoding.

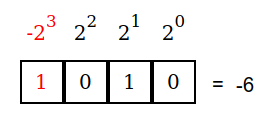

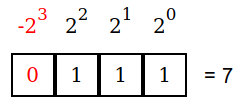

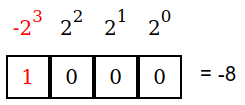

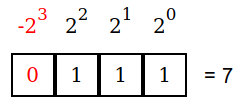

This encoding is very similar to the one that we saw for unsigned values. The only difference is that the most significant bit (the one with the greatest weight) has a negative weight. We’ll call it the sign-bit. In our 4-bit representation, the weights are $-2^3 = -8$, $2^2 = 4$, $2^1 = 2$ and $2^0 = 1$.

Whenever the sign-bit is set to 1, we’ll have a negative number.

In the example above, both the 3rd bit (the sign-bit) and the 1st bit are set to 1. The value represented is then $-2^3 + 2^1 = -8 + 2 = -6$.

We’ll get positive values when the sign-bit is set to 0.

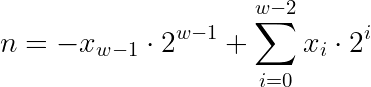

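In mathematical terms, the value $n$ encoded by an array of $w$ bits in two’s complement is:

$$n = -x_{w-1} \cdot 2^{w-1} + \sum_{i=0}^{w-2} x_i \cdot 2^i$$

where $x_{w-1}$ is the sign-bit.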

Ones’ complement

While two’s complement is the most common encoding for signed integers, some machines use an alternative encoding: ones’ complement. In this encoding, the sign-bit has a weight of $-(2^{w-1} - 1)$ instead of $-2^{w-1}$. This makes the range of possible values symmetrical, but it also means that zero has two representations: all bits set to 0, and all bits set to 1.

We’ll focus on the two’s complement notation for the rest of this post.

Range of values

Now that we have seen how numbers are encoded, let’s see the possible range of values that can be represented.

For unsigned data types, the smallest possible value is simply 0. The largest value is obtained when all of the bits are set to 1.

Since we have a sum of all powers of 2 from $2^0$ up to $2^{w-1}$ (assuming an array of $w$ bits), the maximum value is equal to $2^w - 1$. In our example, we had $w = 4$, so the maximum value is $2^4 - 1 = 16 - 1 = 15$.

For signed data types, we’ll get the minimum value when only the sign-bit is set to 1.

The maximum value is obtained when all but the sign-bit are set to 1.

In general terms, for an array of $w$ bits using two’s complement encoding, the minimum value is $-2^{w-1}$ and the maximum value is $2^{w-1} - 1$. Note that the maximum value is one less than the magnitude of the minimum value.

These results are summarized below.

| data type | minimum | maximum |
| --- | --- | --- |
| unsigned | 0 | $2^w - 1$ |
| signed | $-2^{w-1}$ | $2^{w-1} - 1$ |

Integer data types in C

In C, we can find the following integer data types:

unsigned char, char, unsigned short, short, unsigned int, int, unsigned long, long

While there is a signed keyword, it’s rarely necessary to use it. Unless the unsigned keyword is used, integer data types are signed by default. The one exception is plain char: whether it is signed or unsigned depends on the implementation.

Each one of these data types occupies a certain number of bytes (1 byte = 8 bits). While the size of each data type depends on the machine and compiler for which C is implemented, the most common sizes for modern 64-bit machines are listed below (64-bit Windows is a notable exception, where long takes 4 bytes):

| data type | size (bytes) | size (bits) |
| --- | --- | --- |
| char | 1 | 8 |
| short | 2 | 16 |
| int | 4 | 32 |
| long | 8 | 64 |

The size is the same for the signed and unsigned variations of each data type.

Before the C23 revision, the C specification did not require signed data types to be encoded using two’s complement. While it’s less common, some machines use ones’ complement.

The encoding of the different integer values follows the same principles that we studied above, as can be seen in the demonstration at the beginning of this post.